Apr 26, 2026

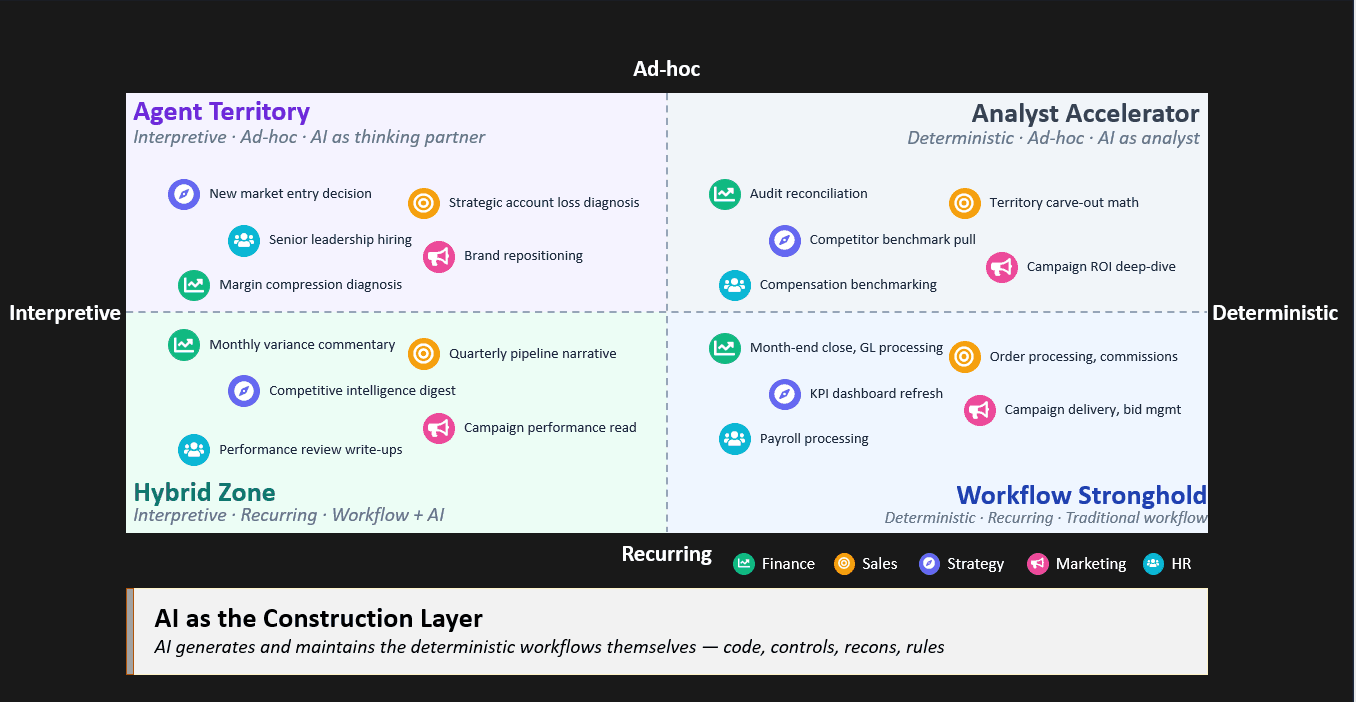

Most enterprise AI spend lands where workflow already wins. The real value sits in interpretive recurring work and in using AI to build the deterministic workflows themselves, not run them.

Most boardroom conversations about AI are stuck on the wrong question: should we use AI for this, or continue with traditional workflow automation?

The framing assumes the choice is binary. It isn't.

AI is not a single technology you adopt or reject. It is a class of capabilities. Some kinds of work are transformed by it. Others fight it uphill. A third category is being quietly re-priced in ways most leaders haven't yet absorbed.

The right question isn't whether to use AI. It is how to decompose the work, and route each piece to the technology that fits.

Here is the uncomfortable part. Most enterprises today are spending AI dollars on the parts of the business where AI delivers the least incremental value. Not because their leaders are misguided. The opposite. The easiest places to deploy AI are also the worst places to deploy it: deterministic, well-documented, rule-based problems where the inputs and outputs are clear. The "before" state is well understood. The "after" state is easy to measure.

But this is exactly where traditional workflow automation already wins — on cost, auditability, and speed.

The real arbitrage is somewhere else.

Two Axes That Determine the Answer

Every task in an enterprise sits somewhere on two dimensions.

Deterministic vs Interpretive

Deterministic: one correct output for a given input. Two competent people are expected to produce identical results. E.g. capital ratio calculations. Journal entries. Credit limit checks.

Interpretive: synthesis and judgment. Two competent people can produce different but equally valid outputs: options, scenarios, or decision directions. E.g. margin compression analysis. Strategic narrative. Customer churn diagnosis.

Recurring vs Ad-hoc

Recurring: Predictable cadence. Similar input/output structures across instances. E.g. Monthly close. Weekly variance reports. Per-transaction credit checks.

Ad-hoc: Event-triggered. Variable inputs and outputs each time. E.g. M&A diligence. New market entry. One-off control investigation.

These two axes give four quadrants. Each one points to a different technology answer.
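As a rough illustration, the two axes can be written down as two yes/no questions per task. The sketch below is a minimal one under stated assumptions: the `Task` fields and the `classify` helper are hypothetical names, and the quadrant labels are the ones used in the sections that follow.

```python
from dataclasses import dataclass
from enum import Enum


class Quadrant(Enum):
    Q1_AGENT_TERRITORY = "Interpretive + Ad-hoc"
    Q2_HYBRID_ZONE = "Interpretive + Recurring"
    Q3_WORKFLOW_STRONGHOLD = "Deterministic + Recurring"
    Q4_ANALYST_ACCELERATOR = "Deterministic + Ad-hoc"


@dataclass(frozen=True)
class Task:
    name: str
    deterministic: bool  # True: one correct output for a given input
    recurring: bool      # True: predictable cadence, similar structure each time


def classify(task: Task) -> Quadrant:
    """Map a task's position on the two axes to one of the four quadrants."""
    if task.deterministic:
        if task.recurring:
            return Quadrant.Q3_WORKFLOW_STRONGHOLD
        return Quadrant.Q4_ANALYST_ACCELERATOR
    if task.recurring:
        return Quadrant.Q2_HYBRID_ZONE
    return Quadrant.Q1_AGENT_TERRITORY


if __name__ == "__main__":
    # The axis flags here are illustrative judgments, not measurements.
    examples = [
        Task("Per-transaction credit check", deterministic=True, recurring=True),
        Task("Monthly business commentary", deterministic=False, recurring=True),
        Task("Strategy formulation", deterministic=False, recurring=False),
    ]
    for task in examples:
        print(f"{task.name}: {classify(task).name} ({classify(task).value})")
```

In practice the two flags are judgment calls, and most real tasks sit on a spectrum rather than at a corner. The booleans only make the routing logic explicit.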

Quadrant 1 (Q1): Agent Territory (Interpretive + Ad-hoc)

Highest upside for AI. Hardest reliability problem.

Strategy formulation. Leadership hiring. Novel diagnosis.

Best served today by AI as a thinking partner to a senior human, not by an agent left to run autonomously.

Long-horizon ambiguity is where traditional systems fail most often.

Quadrant 2 (Q2): Hybrid Zone (Interpretive + Recurring)

Workflow gives you cadence and audit trail. AI gives you interpretation and narrative.

Monthly business commentary. Performance reviews. Periodic risk reviews.

The most under-invested zone in 2026.

Quadrant 3 (Q3): Workflow Stronghold (Deterministic + Recurring)

Traditional automation continues to win - on cost, determinism, auditability.

Token-based inference is meaningfully more expensive per transaction than compiled code.

Non-determinism injects risk where zero variance is required.

AI rarely beats the incumbent stack on TCO when measured honestly.

Quadrant 4 (Q4): Analyst Accelerator (Deterministic + Ad-hoc)

Building a permanent workflow isn't worth the engineering cost.

The next instance will look different. The current alternative is a senior analyst with a spreadsheet.

AI as analyst wins because there is nothing to amortize.

But the matrix alone hides the most important insight. A business process is not a single task. It is a bundle of sub-tasks, each living in a different quadrant.

The leader's job is not to ask "should this process run on AI or workflow?"

The right question is: how do I decompose this process — and route each piece to the technology that fits? This is where interesting work begins.

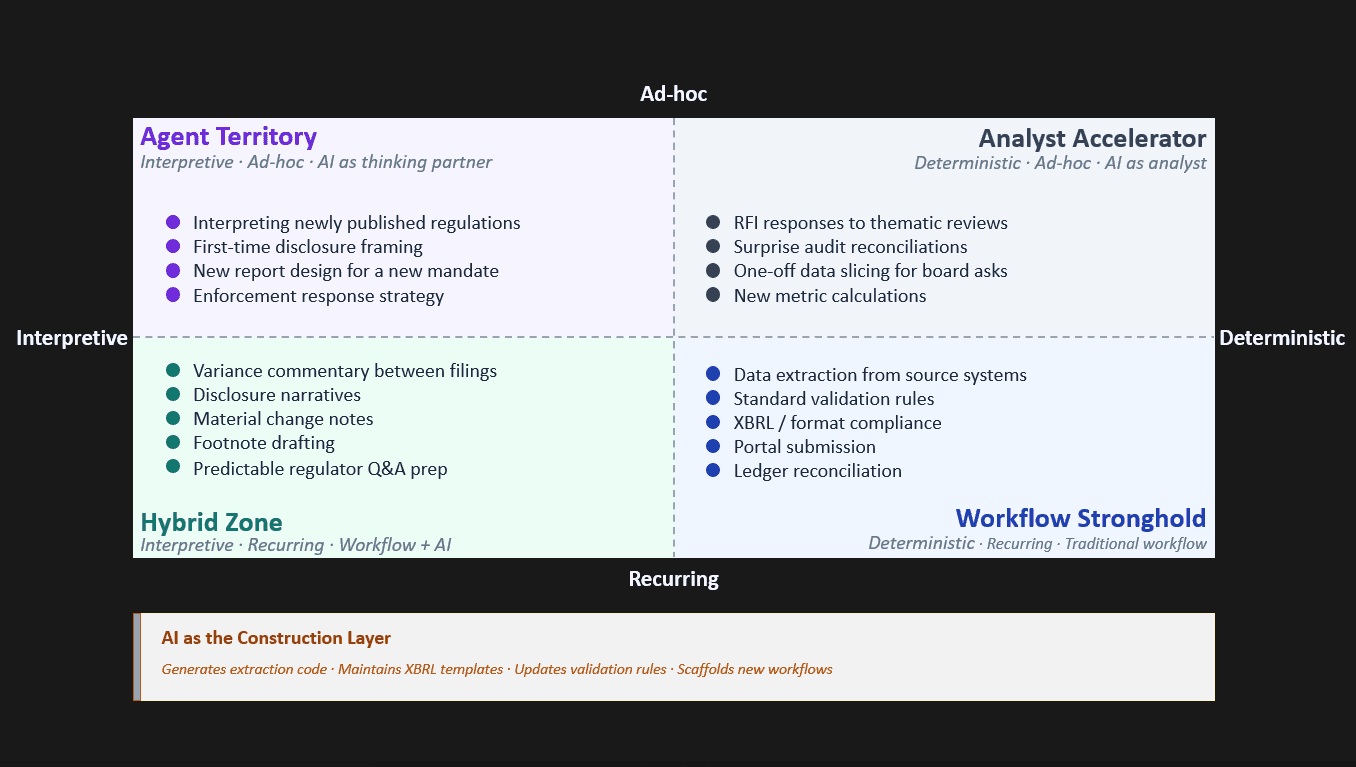

A Working Example: Regulatory Reporting for Banks

Take regulatory reporting. At the function level, it sits squarely in Q3. Every CFO knows this is "workflow territory." Most banks have spent 15+ years automating their reporting stack.

But the function-level placement hides what's actually happening inside the process. A few facts shape where each sub-task really belongs:

The regulator's tolerance for variance is zero. Determinism wins. Workflow stays.

Reporting teams quietly burn the most senior analyst hours on commentary, not computation. Cadence is fixed. Format is standardized. But the interpretation is fresh every cycle.

Workflows can't write the commentary. A human does that — expensively.

Auditability is more nuanced than it looks. Workflow provides a structured trail, but structured rules rarely cover every use case. Interpretive work needs interpretive audit — the reasoning, not just the rule.

One-off regulator queries don't justify permanent workflows. RFIs, thematic review responses, bespoke data pulls — building infrastructure for a single use is irrational. The economics never amortize.

New regulations require fresh thinking, not pattern-matching. First-time disclosures, enforcement responses, and report redesigns can't be solved by retrieving last quarter's template.

Accountability stays human. The correctness of disclosures sits on the shoulders of senior finance and compliance leaders. It cannot be delegated to a model.

Apply these facts, and the matrix follows.
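One way to make the decomposition concrete is a small routing table. The sketch below is one reading of the facts listed above, not a definitive mapping: the sub-task names, their axis flags, and the `route` helper are all illustrative assumptions.

```python
# Hypothetical decomposition of regulatory reporting into sub-tasks.
# Each entry: (sub-task, deterministic?, recurring?). The flags are judgment calls.
SUB_TASKS = [
    ("Compute and file the regulatory figures", True, True),
    ("Write the period commentary", False, True),
    ("Answer a one-off regulator data request", True, False),
    ("Design a first-time disclosure", False, False),
]

# Routing per quadrant, following the matrix described in the article.
ROUTES = {
    (True, True): "Q3: traditional workflow",
    (False, True): "Q2: workflow for cadence and audit trail, AI for interpretation",
    (True, False): "Q4: AI as analyst",
    (False, False): "Q1: AI as thinking partner to a senior human",
}


def route(sub_tasks):
    """Return a {sub-task: recommended technology} mapping."""
    return {name: ROUTES[(det, rec)] for name, det, rec in sub_tasks}


if __name__ == "__main__":
    for name, target in route(SUB_TASKS).items():
        print(f"{name} -> {target}")
```

Whatever the routing says, accountability for the final disclosure stays with the senior finance and compliance leaders who sign it.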

The irony — most enterprises are spending AI dollars trying to push Q3 from workflow to AI. Chasing marginal efficiency on already-optimized processes.

The actual unlock is different.

Use AI to run the Q2 work consuming senior analysts.

Use AI to build and maintain the Q3 workflows themselves.

AI as the Construction Layer

This is the insight most matrices miss.

The deterministic recurring workflows of Q3 don't have to be hand-built anymore.

AI is increasingly capable of generating the workflow code itself.

Writing SQL extractions

Drafting validation logic

Scaffolding XBRL templates

Updating rules when regulations change

The Q3 workflows still run on traditional compute. That is what makes them cheap, deterministic, and auditable. But the engineering (coding) of those workflows shifts. From a multi-quarter project to a multi-week one.
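To make the build/run split tangible, here is the kind of small, deterministic validation rule an AI assistant might draft and a human engineer would review before it goes live. It is a hypothetical sketch: the function name, the line-item structure, and the rules themselves are assumptions, not taken from any real reporting stack.

```python
from decimal import Decimal


def validate_report(line_items: dict[str, Decimal], reported_total: Decimal) -> list[str]:
    """Return a list of validation errors; an empty list means the report passes.

    Decimal is used instead of float so the arithmetic is exact and the same
    input always produces the same result.
    """
    errors = []

    # Rule 1 (assumed): no line item may be negative.
    for name, value in line_items.items():
        if value < 0:
            errors.append(f"Negative value for line item '{name}': {value}")

    # Rule 2 (assumed): line items must sum exactly to the reported total.
    computed_total = sum(line_items.values(), Decimal("0"))
    if computed_total != reported_total:
        errors.append(
            f"Total mismatch: computed {computed_total}, reported {reported_total}"
        )

    return errors


if __name__ == "__main__":
    items = {"retail_loans": Decimal("120.50"), "corporate_loans": Decimal("300.25")}
    print(validate_report(items, Decimal("420.75")))  # passes: empty list
    print(validate_report(items, Decimal("420.00")))  # one total-mismatch error
```

Once reviewed and deployed, a rule like this runs as ordinary code with no model in the loop. AI changes how fast it gets written and updated, not how it executes.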

This reframes how AI shows up in the enterprise.

Three roles for AI. One framework. Different economics.

AI as runner — interpretation in production, mostly Q2.

AI as analyst — one-off bespoke work, Q4.

AI as builder — generating and maintaining the workflows everywhere else.

The third role is the one most strategies miss. And it is the one that quietly changes everything.

"Everything will eventually run on AI" is the wrong prediction.

"Everything will eventually be built by AI" is the better one.

The Misallocation — and the Real Opportunity

The pattern across most enterprises in 2026 is consistent.

AI dollars flow to the Workflow Stronghold — easiest to scope, lowest perceived risk, easiest to measure. The result is marginal efficiency on already-optimized processes, and a credibility problem when the ROI underwhelms.

Meanwhile, the most expensive human hours sit in the Hybrid Zone — variance commentary, disclosure narratives, performance reviews, periodic risk write-ups. Mostly untouched.

The reason it stays untouched is structural, not strategic.

Agent Territory (Q1) gets the keynote demos.

Workflow Stronghold (Q3, plus the workflow part of Q2) gets the procurement-friendly efficiency wins.

The Hybrid Zone (Q2) needs both a workflow layer and an AI layer working together, and pure-play vendors on either side don't pitch it cleanly.

So it stays underfunded. Even though it is precisely where human effort, variability, and AI's incremental value are all highest.

The misallocation isn't just wasted spend. It is opportunity cost on the zone where AI most clearly outperforms the status quo.

The ROI Lens Leaders Should Apply

Before deploying AI on any process — four questions.

Decompose first.

What are the sub-tasks? Which quadrant does each belong to? If the answer is "the whole thing is one quadrant," the decomposition isn't deep enough.

Route by economics.

What is the cost-per-output of AI vs workflow vs human at the volume you'll actually run it? Ten executions a year is a different answer from ten million.
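The volume effect is simple arithmetic: cost per output is the one-time build cost spread over the volume, plus the run cost of each execution. The sketch below uses entirely hypothetical figures (they are placeholders, not benchmarks) to show how the cheapest option flips as volume changes.

```python
def cost_per_output(build_cost: float, run_cost: float, volume: int) -> float:
    """Amortized build cost plus per-execution run cost."""
    if volume <= 0:
        raise ValueError("volume must be positive")
    return build_cost / volume + run_cost


# Hypothetical (build_cost, run_cost_per_output) pairs. Replace with your own numbers.
OPTIONS = {
    "workflow": (200_000.0, 0.01),  # expensive to build, nearly free to run
    "ai": (1_000.0, 5.0),           # cheap to set up, pays per execution
    "human": (0.0, 800.0),          # nothing to build, expensive every time
}


def cheapest(volume: int) -> str:
    """Return the option with the lowest cost per output at this volume."""
    return min(OPTIONS, key=lambda name: cost_per_output(*OPTIONS[name], volume))


if __name__ == "__main__":
    for volume in (1, 10, 10_000_000):
        print(f"{volume:>10} executions: cheapest is {cheapest(volume)}")
```

With these placeholder numbers, a single execution favors the human, ten executions favor AI, and ten million favor the workflow. The crossover points depend entirely on the inputs, so the exercise is worth rerunning with real figures.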

Separate build cost from run cost.

Can AI accelerate the build of the workflow even if the run stays on workflow? Often, yes. That changes the cost-benefit math entirely.

Watch for false economy.

Are you reducing real work? Or swapping a stable, cheap, auditable system for an unstable, expensive, harder-to-govern one with similar throughput?

Where This Is Going

The boundary between AI and workflow is set by today's economics — token costs, reliability, governance, tooling. That boundary is moving.

Token costs are falling and will fall further (by roughly an order of magnitude in two years).

Reasoning, verification, and AI-build tooling are all improving.

But per-token pricing is only half the equation.

Tokens consumed per task are inflating faster than tokens are getting cheaper.

Chain-of-thought reasoning models burn multiples of output tokens. Better answers, longer traces.

Vector stores and RAG - every query retrieves more context. Grounding adds overhead by design.

One-shot becoming many-shot - reliability now demands more examples per call.

History logs and agent memory - long-running flows re-send context every turn.

Then comes the memory problem itself. Bigger context windows don’t mean better context.

Models lose coherence over long inputs.

Retrieval gets noisier as context size grows.

The architectural workarounds (indexing, summarization layers, hierarchical retrieval, memory pruning) all add engineering cost, latency, and maintenance.

Net effect: per-task cost isn't falling as fast as per-token pricing suggests. It can actually increase substantially, and many teams are already seeing it.
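The arithmetic behind this is a single multiplication: per-task cost equals price per token times tokens consumed per task. The figures below are hypothetical, chosen only to show how a tenfold price drop can still leave each task more expensive.

```python
def per_task_cost(price_per_million_tokens: float, tokens_per_task: int) -> float:
    """Cost of one task: unit price times tokens consumed."""
    return price_per_million_tokens * tokens_per_task / 1_000_000


# Hypothetical "before": pricier tokens, short single-shot prompt.
before = per_task_cost(price_per_million_tokens=10.0, tokens_per_task=2_000)

# Hypothetical "after": tokens are 10x cheaper, but reasoning traces,
# retrieved context, and re-sent history make the task use 20x more of them.
after = per_task_cost(price_per_million_tokens=1.0, tokens_per_task=40_000)

if __name__ == "__main__":
    print(f"before: ${before:.2f} per task")
    print(f"after:  ${after:.2f} per task")
    print(f"change: {after / before:.1f}x")
```

In this illustration the price per token falls by a factor of ten while the cost per task doubles. Whether a given workload behaves this way depends on how much its token consumption has actually grown.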

Over the next three to five years, more workloads will shift toward AI. Not because of hype. Because the economics and reliability curves will converge — and a sub-task that today fails the cost-of-variance test will pass it.

That is the reason the boundary will move through decomposition, not through wholesale replacement.

The Right Question

The question isn't "where can we use AI?"

That gets you the same answer everyone else is getting. And the same disappointing ROI.

The question is:

Where does AI fundamentally outperform structured systems?

Where does it not?

What work that humans currently own should move to AI, before everyone else figures it out?

The companies that get this right won't be the ones who adopt AI fastest. They will be the ones who deploy it with precision, aligned to the structure of the work itself, decomposed sub-task by sub-task.